The goal of the case study was to use the synthetic data to train some models to predict customer churn and to evaluate the performance of those trained models. The dataset contains 128 columns, including one column indicating whether a customer has left the company (i.e. This is especially devastating for AI tasks where “predictive power” is essential because bad quality data will result in bad insights from the AI model.įor the case study, the target dataset was a telecom dataset provided by SAS containing the data of 56.600 customers. They manipulate data and thereby destroy data in the process.

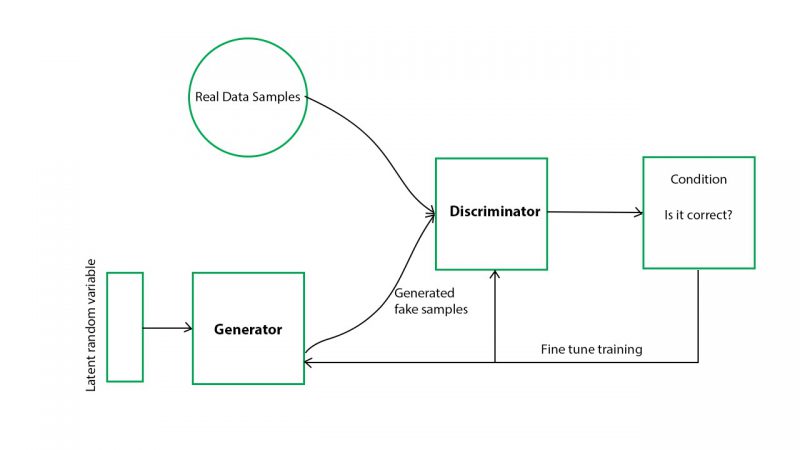

This means that there will always be a privacy risk, that is especially present due to all available techniques and open datasets. There is always a one-to-one relationship with the original data.Furthermore, since they work differently, there will always be debate about which methods to apply and what combination of techniques are needed. They work differently per data type and per dataset, making them hard to scale.Those techniques introduce 3 key challenges: You can find examples in the table below. Examples are generalization, suppression, wiping, pseudonymization, data masking and shuffling of rows and columns. Is data anonymization not a solution?Ĭlassic anonymization techniques have in common that they manipulate original data in order to hinder tracing back individuals. To answer these, Syntho started a case study together with SAS. In collaboration with the Dutch AI Coalition (NL AIC), they investigated the value of synthetic data by comparing AI-generated synthetic data generated by the Syntho Engine with original data via various assessments on data quality, legal validity and usability. However, synthetic data generation with AI is a relatively new solution that typically introduces frequently asked questions. They help organizations to build a strong data foundation with easy and fast access to high-quality data and recently won the Philips Innovation Award. Syntho, an expert in AI-generated synthetic data, aims to turn privacy by design into a competitive advantage with AI-generated synthetic data. This is what we call a synthetic data twin, where one mimics original sensitive data with an AI algorithm. It is new to apply AI in the data synthesis process to model the generated synthetic data in such a way that it mimics the characteristics, relationships and statistical patterns from the original data set. 
Where original data is collected through interactions with individuals, synthetic data is generated by an AI algorithm that generates completely new and artificial data points. What is AI-generated synthetic data exactly? Gartner predicts by 2024 that 60% of the data used to develop AI and analytics applications will be synthetically generated. However, the rapid availability of data is a challenge due to increasingly strict privacy regulations.Ī possible solution is to use synthetic data. Data is crucial for the development of artificial intelligence (AI) applications.
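
The case study trains churn models on synthetic data and judges them against real customers. A common way to run that kind of comparison is "train on synthetic, test on real" (TSTR): score a model trained on synthetic rows against a real holdout, next to a model trained on real rows. The sketch below illustrates that loop in Python, under loudly stated assumptions:

- The customer table is a small hypothetical toy with two made-up features, `tenure_months` and `monthly_charges`.
- `fit_generator` / `sample_synthetic` are a naive per-class Gaussian model standing in for an AI generator. They are not the Syntho Engine.
- The churn model is a nearest-centroid classifier chosen only for brevity.

None of these choices should be read as what SAS or Syntho used.

```python
import math
import random
from statistics import mean, stdev

# Each row is ((tenure_months, monthly_charges), churned); feature names are hypothetical.


def make_toy_churn_data(n, rng):
    """Hypothetical stand-in for a real customer table (toy data, not the SAS dataset)."""
    rows = []
    for _ in range(n):
        churned = 1 if rng.random() < 0.3 else 0
        if churned:
            x = (max(0.0, rng.gauss(6, 3)), rng.gauss(90, 10))
        else:
            x = (max(0.0, rng.gauss(40, 12)), rng.gauss(60, 15))
        rows.append((x, churned))
    return rows


def fit_generator(rows):
    """Naive generator (assumption): class prior plus an independent Gaussian per feature and class."""
    model = {}
    for label in (0, 1):
        xs = [x for x, y in rows if y == label]
        cols = list(zip(*xs))
        model[label] = {
            "prior": len(xs) / len(rows),
            "params": [(mean(c), stdev(c)) for c in cols],
        }
    return model


def sample_synthetic(model, n, rng):
    """Draw brand-new rows from the fitted model; no row is copied from a real customer."""
    rows = []
    for _ in range(n):
        label = 1 if rng.random() < model[1]["prior"] else 0
        x = tuple(rng.gauss(mu, sd) for mu, sd in model[label]["params"])
        rows.append((x, label))
    return rows


def train_nearest_centroid(rows):
    """Toy churn classifier: standardize features, then keep one centroid per class."""
    cols = list(zip(*[x for x, _ in rows]))
    scale = [(mean(c), stdev(c)) for c in cols]

    def standardize(x):
        return [(v - mu) / sd for v, (mu, sd) in zip(x, scale)]

    centroids = {}
    for label in (0, 1):
        zs = [standardize(x) for x, y in rows if y == label]
        centroids[label] = [mean(c) for c in zip(*zs)]

    def predict(x):
        z = standardize(x)
        return min(centroids, key=lambda lbl: math.dist(z, centroids[lbl]))

    return predict


def accuracy(predict, rows):
    return sum(predict(x) == y for x, y in rows) / len(rows)


rng = random.Random(42)
real = make_toy_churn_data(2000, rng)
train_real, test_real = real[:1500], real[1500:]

# The generator only ever sees the real training split; the real test split stays held out.
synthetic = sample_synthetic(fit_generator(train_real), 1500, rng)

acc_trtr = accuracy(train_nearest_centroid(train_real), test_real)  # train real, test real
acc_tstr = accuracy(train_nearest_centroid(synthetic), test_real)   # train synthetic, test real
print(f"train-on-real: {acc_trtr:.3f}  train-on-synthetic: {acc_tstr:.3f}")
```

Comparing the two printed accuracies is the point of the exercise. If the synthetic twin preserves the patterns that matter for churn, the model trained on synthetic rows should score close to the one trained on real rows. The numbers here come from toy data and say nothing about the case study's results. A naive generator like this one also ignores correlations between features within a class, which is the kind of structure an AI-based generator aims to capture.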

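For contrast, here is a minimal Python sketch of several classic anonymization techniques the article lists, applied to a few customer records. It covers generalization, suppression, pseudonymization, data masking and column shuffling. Everything in it is an illustrative assumption: the records, the field names, `SECRET_KEY` and the helper functions. Keyed hashing with HMAC is just one common way to pseudonymize.

Two things are worth noticing. First, every output row is still derived from exactly one input row, which is the one-to-one relationship the article identifies as a privacy risk. Second, shuffling the `plan` column scrambles its link to the other columns, which is one way such techniques destroy information.

```python
import hashlib
import hmac
import random

SECRET_KEY = b"hypothetical-secret"  # assumption: a key kept away from data users

customers = [  # hypothetical records, not from the case study
    {"customer_id": "C-1001", "name": "Alice", "age": 34, "phone": "0612345678", "plan": "Premium"},
    {"customer_id": "C-1002", "name": "Bob", "age": 58, "phone": "0687654321", "plan": "Basic"},
    {"customer_id": "C-1003", "name": "Carol", "age": 41, "phone": "0611122233", "plan": "Basic"},
]


def pseudonymize(value):
    """Pseudonymization: replace an identifier with a keyed hash (same input, same token)."""
    return hmac.new(SECRET_KEY, value.encode(), hashlib.sha256).hexdigest()[:12]


def generalize_age(age, width=10):
    """Generalization: replace an exact age with a band such as '30-39'."""
    low = age // width * width
    return f"{low}-{low + width - 1}"


def mask_phone(phone, visible=2):
    """Data masking: hide all but the last few digits."""
    return "*" * (len(phone) - visible) + phone[-visible:]


def anonymize(records, rng):
    out = []
    for r in records:
        # Each output row is built from exactly one input row (the one-to-one link).
        out.append({
            "customer_id": pseudonymize(r["customer_id"]),
            # Suppression: the "name" column is simply not copied.
            "age": generalize_age(r["age"]),
            "phone": mask_phone(r["phone"]),
            "plan": r["plan"],
        })
    # Shuffling: permute one column across rows, which also breaks its link to other columns.
    plans = [r["plan"] for r in out]
    rng.shuffle(plans)
    for r, p in zip(out, plans):
        r["plan"] = p
    return out


anonymized = anonymize(customers, random.Random(0))
for row in anonymized:
    print(row)
```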